#9 Why Healthcare AI Needs Eyes for Children’s Health

The FDA has now cleared a substantial and rapidly growing number of AI-enabled medical devices. Billions have been invested in digitizing healthcare data, from standardizing EHRs to building data warehouses, curating multimodal datasets, and beyond. And yet most clinical AI models still fail to generalize. The reason is a fundamental divide: AI has succeeded in highly "structured" fields like radiology and genetics, where data follows rigid protocols. But at the bedside, we are hitting a performance ceiling because our data is "unstructured" and reductive. The scales we use to document clinical impressions compress complex human behavior into a single number. The notes we write are incomplete. We are building AI on imperfect representations of patient state, missing the very signals clinicians rely on most.

Nowhere is this gap more consequential than in the care of our most vulnerable patients.

The Problem

Over 1.3 million infants are admitted to NICUs in affluent countries every year. All of them undergo cardiorespiratory monitoring (heart rate, oxygen saturation, blood pressure), which allows clinical practitioners to respond quickly to hemodynamic changes. But there is no accessible, scalable equivalent for neurologic monitoring, even though neurologic injury has the greatest impact on long-term neurodevelopmental outcomes.

Instead, clinicians assess neurologic status the way they always have: by watching the baby. An infant’s level of alertness, reflected in how they move, how they respond to stimuli, and how their tone changes over time, is the most sensitive indicator of neurologic integrity. Nurses and providers perceive these signs at every bedside encounter, but only intermittently, and the richness of that observation is lost the moment it is compressed into a single nursing note. Between assessments, no one is watching.

EEG can provide continuous neurologic data, but it requires specialized equipment and expertise available at only a minority of NICUs. Even where EEG is available, it has blind spots: there is no reliable association between EEG findings and depth of sedation in neonates. The clinical reality is that for most infants, neurologic monitoring depends on intermittent human observation, which is subjective, variable, and impossible to scale.

What If We Could Teach a Camera to Perceive What Clinical Practitioners Perceive?

Advances in computer vision, driven largely by industry investment in research labs, autonomous vehicles, augmented reality, and consumer devices, have produced “foundation models” that can now be adapted for clinical use. We asked a simple question: can video alone, captured at the bedside, provide the kind of continuous neurologic monitoring that the NICU currently lacks?

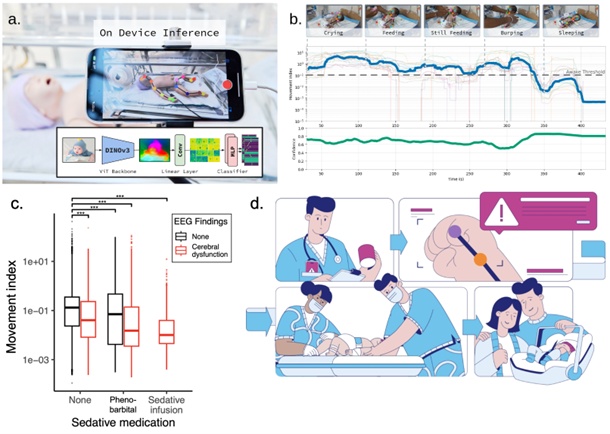

Our answer is NeoPose. NeoPose is a computer vision system that uses AI pose estimation to continuously track an infant’s movement from a standard video stream (PMID 39764545). It maps anatomic landmarks frame by frame, a digital analog to the neurologic exam, and derives a continuous movement index that correlates with key neurologic changes.
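The post does not spell out how NeoPose computes its movement index. Purely to illustrate the general idea, the sketch below assumes a pose estimator has already produced per-frame landmarks as (x, y, confidence) tuples, treats movement as the mean displacement of confidently detected landmarks between consecutive frames, averages that over a trailing window, and log-scales the result to match the log-scaled axis in Figure 1b. The function names, the 0.5 confidence cutoff, the window length, and the threshold rule are all hypothetical and should not be read as NeoPose's actual method.

```python
import math
from typing import List, Optional, Sequence, Tuple

# Hypothetical landmark format: (x, y, confidence), x and y in normalized image coordinates.
Keypoint = Tuple[float, float, float]


def frame_displacements(
    frames: Sequence[Sequence[Keypoint]], min_conf: float = 0.5
) -> List[Optional[float]]:
    """Mean landmark displacement between each frame and the one before it.

    Only landmarks detected with confidence >= min_conf in both frames are used.
    Entry 0 is None (no previous frame), as is any frame with no usable landmark pair.
    """
    if not frames:
        return []
    out: List[Optional[float]] = [None]
    for prev, curr in zip(frames, frames[1:]):
        dists = [
            math.hypot(c[0] - p[0], c[1] - p[1])
            for p, c in zip(prev, curr)
            if p[2] >= min_conf and c[2] >= min_conf
        ]
        out.append(sum(dists) / len(dists) if dists else None)
    return out


def movement_index(
    displacements: Sequence[Optional[float]], window: int = 30, eps: float = 1e-6
) -> List[Optional[float]]:
    """Trailing-window mean of the displacements, log10-scaled (eps avoids log10(0))."""
    index: List[Optional[float]] = []
    for i in range(len(displacements)):
        recent = [d for d in displacements[max(0, i - window + 1) : i + 1] if d is not None]
        index.append(math.log10(sum(recent) / len(recent) + eps) if recent else None)
    return index


def is_awake(value: Optional[float], threshold: float) -> bool:
    """Hypothetical rule: at or above the threshold counts as awake; missing data never does."""
    return value is not None and value >= threshold


# Example: two landmarks over three frames.
frames = [
    [(0.50, 0.50, 0.9), (0.20, 0.80, 0.9)],
    [(0.53, 0.54, 0.9), (0.20, 0.80, 0.9)],  # first landmark moves 0.05, second is still
    [(0.53, 0.54, 0.9), (0.20, 0.80, 0.2)],  # second landmark is low-confidence, so skipped
]
disp = frame_displacements(frames)
idx = movement_index(disp, window=2)
```

Comparing the log-scaled index against an awake threshold, as `is_awake` does, mirrors the idea shown in Figure 1b; any real threshold would need clinical validation rather than the arbitrary values used here.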

NeoPose is the first device to identify cerebral dysfunction without EEG or neuroimaging (ROC-AUCs 0.76–0.91) and the first non-invasive sedation and pain monitor for neonates (ROC-AUCs 0.87–0.91). It performs consistently across race, ethnicity, sex, and neurologic pathology. We recently enhanced it with Vision Transformer (ViT) architectures, part of the same transformer family that underlies tools like ChatGPT, achieving a six-fold improvement in accuracy. And we built it to run on an iPhone, processing video at the bedside so that no patient data ever leaves the hospital’s firewall.
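ROC-AUC summarizes how well a continuous score separates two groups: it is the probability that a randomly chosen positive case (for example, a sedated infant) scores higher than a randomly chosen negative case, so 0.5 is chance and 1.0 is perfect separation. The minimal, dependency-free sketch below implements that pairwise (Mann-Whitney) definition. Because lower movement is associated with sedation, the example negates the movement index before scoring. The numbers are invented for illustration and do not come from the NeoPose studies.

```python
from typing import Sequence


def roc_auc(scores: Sequence[float], labels: Sequence[int]) -> float:
    """Fraction of (positive, negative) pairs in which the positive scores higher.

    Ties count as half a correct ordering, which makes this equal to the
    Mann-Whitney U statistic divided by (n_pos * n_neg).
    """
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Hypothetical per-patient movement index values and sedation labels (1 = sedated).
movement = [0.05, 0.12, 0.40, 0.10, 0.75]
sedated = [1, 1, 0, 0, 0]
# Lower movement should indicate sedation, so use the negated index as the score.
auc = roc_auc([-m for m in movement], sedated)
# 5 of the 6 positive-negative pairs are ordered correctly, so auc == 5/6.
```

The quadratic pairwise loop is the clearest statement of the definition; for large cohorts a rank-based computation gives the same value more efficiently.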

We are now preparing for the first prospective deployment of video AI in neonatal care: a multi-site silent clinical trial, in which the model runs alongside routine care but its outputs are not shown to clinicians, with an FDA Breakthrough Device pathway in view.

Why This Matters Beyond the NICU

The principle behind NeoPose, that video can capture clinically meaningful information that no existing sensor or data field records, extends well beyond neonatal care. Researchers have used video to detect seizures at home, screen for cerebral palsy, assess postoperative pain, monitor sleep-wake states, and analyze caregiver-child interactions. We have already demonstrated that NeoPose’s movement-based approach can track sedation and consciousness in adult neuro-ICU patients (PMID 41532764). The potential applications span telehealth, developmental follow-up, rare disease detection, and home monitoring. Beyond neurologic monitoring, AI analysis of video may be used to quantify other common clinical scenarios, such as work of breathing and feeding intolerance, and may unlock a host of operational tools, such as more precise hourly critical care billing and monitoring adherence to harm-prevention bundles.

But unlocking this potential means confronting a hard truth: video is healthcare’s most sensitive data. It can be de-identified, but the margin for error is razor-thin: a single unblurred frame can undo everything. Consumer devices like iPhones, while powerful, were not built for healthcare and require rigorous lockdown to prevent patient data from reaching cloud services. And our EHR systems were never designed to store, display, or integrate visual intelligence.
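One way to respect that razor-thin margin is to make de-identification fail closed: when the system cannot confidently account for every face in a frame, it suppresses the whole frame instead of passing it through. The sketch below is a hypothetical illustration of that policy, not a description of NeoPose. It assumes face boxes come from some upstream detector, represents frames as plain grayscale pixel grids, and masks regions with black pixels instead of blurring so that it stays dependency-free.

```python
from typing import List, Sequence, Tuple

Frame = List[List[int]]  # grayscale pixel values, row-major
# Hypothetical detector output: (row0, col0, row1, col1, confidence); row1 and col1 are exclusive.
FaceBox = Tuple[int, int, int, int, float]


def redact_frame(frame: Frame, faces: Sequence[FaceBox], min_conf: float = 0.8) -> Frame:
    """Fail-closed redaction of a single frame.

    If no face was detected, or any detection falls below min_conf, the whole
    frame is blacked out. Otherwise every face box is blacked out. The input
    frame is never modified; a new frame is returned.
    """
    if not faces or any(face[4] < min_conf for face in faces):
        return [[0] * len(row) for row in frame]
    out = [row[:] for row in frame]
    for r0, c0, r1, c1, _conf in faces:
        # Clamp each box to the frame so partially out-of-view faces are still handled.
        for r in range(max(0, r0), min(len(out), r1)):
            for c in range(max(0, c0), min(len(out[r]), c1)):
                out[r][c] = 0
    return out
```

The trade-off is deliberate: a frame in which the face is hidden or the detector is unsure is lost for review, trading some utility for erring toward privacy. The policy still cannot catch a face the detector misses entirely while confidently reporting another, so it narrows the risk rather than eliminating it.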

These are solvable problems, but they require proactive governance, from researchers, IRBs, regulators, and industry, before the technology outpaces oversight.

Looking Ahead

The next breakthrough in clinical AI will not come from bigger models trained on more routinely collected EHR data and associated modalities. It will come from capturing what clinicians have always relied on but never systematically digitized: what they see at the bedside. For the most vulnerable patients, the infants in our NICUs, that future is already taking shape.

Figure 1: The NeoPose System for Continuous, Real-Time Neonatal Monitoring. (a) On-device inference and architecture: NeoPose uses a bedside smartphone for real-time computer vision analysis, ensuring privacy by processing all data locally. (b) Behavioral state tracking: Representative output quantifying common activities (e.g., crying, feeding, sleeping). The system tracks a continuous Movement Index (y-axis, log-scaled) against a validated Awake Threshold to assess neurological status, supported by a real-time Confidence score. (c) Retrospectively, we found that decreases in our movement index were associated with sedation and cerebral dysfunction in infants in the NICU (shown; PMID 39764545) and with lower levels of consciousness in the adult neurosciences ICU (PMID 41532764). (d) Critical events are automatically captured on video to allow expedient review, even of events not directly observed, enabling a more rapid response and empowering clinical teams to deliver more timely, targeted care.
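Panel (d) describes capturing critical events on video so clinicians can review what happened, including the lead-up to events no one saw. A common way to do this is a pre-event ring buffer: the system keeps only the most recent frames in memory and, when an event fires, saves them together with the frames that follow. The sketch below is a hypothetical illustration of that pattern; the class name, frame counts, and the source of the event trigger (for example, a movement index crossing a threshold) are assumptions, not details of NeoPose.

```python
from collections import deque
from typing import Any, Deque, List, Optional


class EventClipRecorder:
    """Pre-/post-event clip capture backed by a bounded ring buffer (hypothetical sketch)."""

    def __init__(self, pre_frames: int, post_frames: int) -> None:
        # deque with maxlen silently drops the oldest frame once full.
        self._history: Deque[Any] = deque(maxlen=pre_frames)
        self._post_frames = post_frames
        self._current: Optional[List[Any]] = None  # clip being recorded, if any
        self._remaining = 0
        self.clips: List[List[Any]] = []

    def push(self, frame: Any, event: bool = False) -> None:
        """Add one frame; `event` marks the frame on which a critical event fired."""
        if self._current is not None:
            # Already recording: an event firing now is folded into this clip.
            self._current.append(frame)
            self._remaining -= 1
            if self._remaining == 0:
                self._finish()
        elif event:
            # Start a clip from the buffered lead-up plus the triggering frame.
            self._current = list(self._history) + [frame]
            self._remaining = self._post_frames
            if self._remaining == 0:
                self._finish()
        self._history.append(frame)

    def _finish(self) -> None:
        assert self._current is not None
        self.clips.append(self._current)
        self._current = None


recorder = EventClipRecorder(pre_frames=2, post_frames=2)
for t in range(10):
    recorder.push(f"frame-{t}", event=(t == 5))
# recorder.clips now holds one clip: frames 3 through 7.
```

Because the buffer has a fixed length, memory stays bounded however long the monitor runs, and only video surrounding an event is retained, which also limits how much sensitive footage is stored.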

Supported by the National Center for Advancing Translational Sciences of the National Institutes of Health under Award Number UL1TR002378. The content is solely the responsibility of the authors and does not necessarily represent the official views of the National Institutes of Health.

Author

— by Benjamin Glicksberg, PhD, Associate Professor and Founding Director, Center for AI in Children’s Health, Icahn Mount Sinai, 3/2026

Continue the conversation! Please email us your comments to post on this blog. Enter the blog post # in your email Subject.